Programming Diary #42: Establishing a Steem-Based Data Structure

TL;DR: (1.) A new library was published for building linked lists with Steem's custom_json transactions; (2.) There have been significant updates to the post selection algorithm that Thoth uses.

It's hard to believe that it's been six months since Programming Diary #41, but the blockchain doesn't lie. This is not because I haven't been doing any programming, but rather because it's more fun to write code than to write about writing code. When I get free time, it's hard to motivate myself to write articles instead of working on one of my coding projects.

Recently, there have been some updates that I thought I should post about, though.

With a six-month gap, there's a lot that I won't be able to write about now, but I want to post about two substantial updates: a new library for creating doubly linked lists via custom_json transactions on the Steem blockchain, and Thoth's updated selection algorithm. So, let's get to it.

Linked Lists on Steem

This was actually @cmp2020's idea. A week or two ago, we were talking about the possibility of using Steem to gamify wildlife protection and the difficulty of scanning custom_json transactions for updates, and he said something like, "Just put it in a linked list." This seems like a pretty good idea.

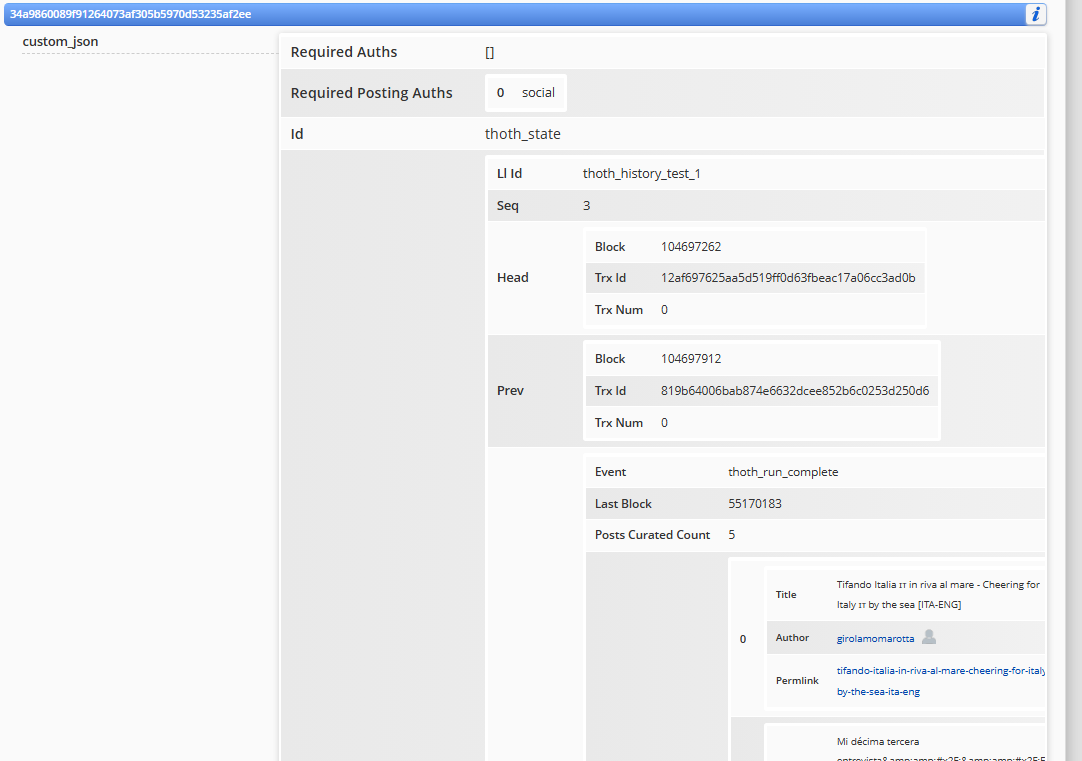

Image from Steem World

Fortunately, I had a weird work schedule this week, so I was able to use my mornings to work with Gemini, Claude, and Cline as "pair programmers" and get started playing with the concept. As a result, I put together a first pass at a linkedListOnSteem library and got Thoth plugged into it for storing its curation history in a linked list on the blockchain.

The first draft was up in no time, but then I realized that there were absolutely no protections against near-simultaneous updates from multiple processes or accounts. So, the first protection I put in was the ability to ignore nodes that were posted by other Steem accounts. This only solved part of the problem, however, since a single account could still fork the list by posting from multiple processes at the same time. So, I consulted with the AIs, and we settled on Optimistic Concurrency Control (OCC) to guard against concurrent updates from the same account. With the new safe_append() and safe_delete() methods, the library now broadcasts the transaction and then checks whether any other broadcasts conflict with it. If so, the later node is deleted and the broadcast is retried.
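To make the broadcast-then-check idea concrete, here is a minimal sketch of optimistic concurrency control against an in-memory stand-in for the blockchain. It is not the linkedListOnSteem implementation: `FakeLedger`, `Node`, the `after_read` test hook, and this `safe_append` signature are all hypothetical names invented for illustration, and "deleting" a node is reduced to setting a flag.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Node:
    node_id: int
    prev_id: Optional[int]  # the node this one claims to follow
    author: str
    payload: dict
    deleted: bool = False


@dataclass
class FakeLedger:
    """Hypothetical in-memory stand-in for the chain: an append-only node list."""
    nodes: list = field(default_factory=list)

    def broadcast(self, prev_id, author, payload):
        node = Node(len(self.nodes), prev_id, author, payload)
        self.nodes.append(node)
        return node

    def live_nodes(self, author):
        # Ignore nodes posted by other accounts and nodes already deleted.
        return [n for n in self.nodes if n.author == author and not n.deleted]

    def tail_id(self, author):
        live = self.live_nodes(author)
        return live[-1].node_id if live else None


def safe_append(ledger, author, payload, max_retries=3, after_read=None):
    """Broadcast first, then check for a conflict; the later node loses and retries."""
    for attempt in range(max_retries):
        prev_id = ledger.tail_id(author)
        if after_read is not None:
            after_read(attempt, prev_id)  # test hook: lets the demo inject a racing writer
        mine = ledger.broadcast(prev_id, author, payload)
        # Conflict: an earlier live node already claims the same predecessor.
        rivals = [n for n in ledger.live_nodes(author)
                  if n.prev_id == prev_id and n.node_id < mine.node_id]
        if not rivals:
            return mine
        mine.deleted = True  # our node is the later one, so remove it and retry
    raise RuntimeError("could not append without a conflict")


ledger = FakeLedger()
safe_append(ledger, "alice", {"n": 0})


def racing_writer(attempt, prev_id):
    # Simulate a second process that read the same tail and broadcast first.
    if attempt == 0:
        ledger.broadcast(prev_id, "alice", {"n": "rival"})


node = safe_append(ledger, "alice", {"n": 1}, after_read=racing_writer)
live_ids = [n.node_id for n in ledger.live_nodes("alice")]
print(node.prev_id, live_ids)
```

In this demo the first attempt collides with the rival node, gets flagged as deleted, and the retry links to the rival instead, so the list stays a single chain rather than forking.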

This all went through several iterations of imagining how things could break, creating tests, executing them, and revising code. I very much doubt that I have imagined every single way that things could break, so the only use case I would (mostly) trust right now is when the list is written by a single account using one process at a time.

That was far enough that I could start testing with Thoth, though, so the last iteration was to package it on GitHub so that it can be downloaded into any virtual environment. That's where the GitHub repo stands at this moment.

Next up, Cline, Gemini, and I updated Thoth to make use of it (in its test branch). Today, Thoth used that library to store its test curations in this list [ 0, 1, 2, 3 ].

You can start at the tail (node 3) and step through the "prev" pointers to get back to the HEAD node, and you can also build the "next" pointers in memory, as you go. That's what linkedListOnSteem does.
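The traversal described above can be sketched as follows. This is an illustration only, not the library's code: the dictionary layout, the `walk_from_tail` name, and the small integer ids are assumptions, and real nodes would be fetched from custom_json transactions rather than from a local dict.

```python
def walk_from_tail(nodes_by_id, tail_id):
    """Follow "prev" pointers from the tail back to the head, filling in "next" as we go."""
    ordered = []
    next_id = None
    current = tail_id
    seen = set()
    while current is not None:
        if current in seen:
            raise ValueError(f"cycle detected at node {current}")
        seen.add(current)
        node = dict(nodes_by_id[current])  # copy, so the stored data is not modified
        node["next"] = next_id             # "next" exists only in memory
        ordered.append(node)
        next_id = current
        current = node["prev"]
    ordered.reverse()                      # return head-to-tail order
    return ordered


# Hypothetical stored nodes: only "prev" is recorded with each node.
stored = {
    0: {"id": 0, "prev": None, "payload": "HEAD"},
    1: {"id": 1, "prev": 0, "payload": "first curation"},
    2: {"id": 2, "prev": 1, "payload": "second curation"},
    3: {"id": 3, "prev": 2, "payload": "third curation"},
}

chain = walk_from_tail(stored, tail_id=3)
ids = [n["id"] for n in chain]
next_pointers = [n["next"] for n in chain]
print(ids, next_pointers)
```

Only the "prev" pointer needs to be stored, because a node cannot know its successor at the time it is written; the "next" pointers are rebuilt on each read.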

Accordingly, anyone can now use that library to make linked lists out of any JSON payload and store them on the blockchain using custom_json transactions.

Thoth's new post selection algorithm

Coding Changes

I first heard of Cline about a month ago, and I wanted to try it out immediately. So, that night I plugged it into VS Code and let 'er rip. I told Cline to look at the Thoth code, identify the most important change that was needed, and implement a fix. And that's where today's big Thoth update comes from.

Cline decided that Thoth needed to make its post selections through the use of scoring instead of rigid filtering. We eventually compromised and implemented a hybrid method. Thoth's previous curation involved two tiers:

- Screening based on author, post, and follower-network characteristics

- AI/LLM evaluation

Cline and I added a new tier in between, which was based on scoring. It now goes like this:

- Screening based on [fewer] author, post, and follower-network characteristics

- Score-based evaluation, based on author, content, and engagement

- AI/LLM evaluation
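The three tiers listed above can be sketched as a simple pipeline. Everything specific here is a placeholder rather than Thoth's actual logic: the rule set, the weights, the 0.5 threshold, the field names, and the stubbed `llm_approves` callback are all assumptions made for illustration.

```python
BLOCKED_AUTHORS = {"spammer"}  # hypothetical screening rule
MIN_WORDS = 300                # hypothetical screening rule


def passes_screening(post):
    # Tier 1: hard rules that reject a post outright.
    return post["author"] not in BLOCKED_AUTHORS and post["word_count"] >= MIN_WORDS


def score(post):
    # Tier 2: weighted sum of author, content, and engagement signals (each 0..1).
    return (0.4 * post["author_score"]
            + 0.4 * post["content_score"]
            + 0.2 * post["engagement_score"])


def select(posts, llm_approves, threshold=0.5):
    stats = {"rejected_by_rule": 0, "rejected_by_score": 0,
             "rejected_by_llm": 0, "accepted": 0}
    accepted = []
    for post in posts:
        if not passes_screening(post):
            stats["rejected_by_rule"] += 1
        elif score(post) < threshold:
            stats["rejected_by_score"] += 1
        elif not llm_approves(post):  # Tier 3: only survivors reach the LLM
            stats["rejected_by_llm"] += 1
        else:
            stats["accepted"] += 1
            accepted.append(post)
    return accepted, stats


def make_post(author, words, a, c, e, title="A post"):
    return {"author": author, "word_count": words, "title": title,
            "author_score": a, "content_score": c, "engagement_score": e}


posts = [
    make_post("spammer", 900, 0.9, 0.9, 0.9),
    make_post("bob", 500, 0.1, 0.2, 0.1),
    make_post("carol", 800, 0.9, 0.8, 0.7),
    make_post("dave", 700, 0.8, 0.8, 0.8, title="low effort"),
]

# Stub standing in for the LLM call.
accepted, stats = select(posts, llm_approves=lambda p: "low effort" not in p["title"])
print([p["author"] for p in accepted], stats)
```

Ordering the tiers from cheapest to most expensive means the LLM, which is the slow and rate-limited step, only sees posts that have already cleared the rules and the score threshold.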

Since I was working on the selection algorithm, anyway, I also took the opportunity to expand on this suggestion from @moecki:

Perhaps it could be avoided to include an author with multiple posts (per post or per day).

So, the Thoth operator can now limit how many times a single author appears per post, per day, or per week, and the operator can also limit how many times the same post will be permitted to appear in a 30-day period.
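As a rough sketch of how such limits could be checked, assuming the curation history is available as a list of (author, timestamp) pairs. The function name, parameter names, and default limits below are hypothetical and are not Thoth's configuration keys.

```python
from collections import Counter
from datetime import datetime, timedelta


def author_allowed(author, now, history, in_this_post,
                   max_per_post=1, max_per_day=2, max_per_week=5):
    """Return True if including this author would stay within all three limits.

    history: (author, curated_at) pairs from earlier curation posts.
    in_this_post: Counter of authors already chosen for the post being built.
    """
    if in_this_post[author] >= max_per_post:
        return False
    day_count = sum(1 for a, t in history
                    if a == author and now - t < timedelta(days=1))
    week_count = sum(1 for a, t in history
                     if a == author and now - t < timedelta(days=7))
    return day_count < max_per_day and week_count < max_per_week


now = datetime(2025, 1, 10, 12, 0)  # arbitrary example time
history = [
    ("alice", now - timedelta(hours=2)),
    ("alice", now - timedelta(hours=5)),
    ("bob", now - timedelta(days=3)),
]

alice_ok = author_allowed("alice", now, history, Counter())             # hit the daily limit
bob_ok = author_allowed("bob", now, history, Counter())                 # within all limits
bob_again = author_allowed("bob", now, history, Counter({"bob": 1}))    # already in this post
print(alice_ok, bob_ok, bob_again)
```

The 30-day limit on repeating the same post could follow the same pattern, keyed on the post's identifier instead of the author.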

Another change that the AIs and I implemented was to add some summary statistics at the end of each run so the operator can see how many posts are being impacted by each of the different levels of evaluation. Until now, I had been "flying blind" with regard to total numbers. Now, I can get a sense of how selective Thoth is being.

For example, here are some numbers from this morning's run:

Total Posts Evaluated: 1478

Total Posts Accepted for Curation: 17

Total Posts Rejected: 1461

Acceptance Rate: 1.15%

We can already see that Thoth let 17 posts through to the LLM, and we know that only 5 were posted. This means that the LLM rejected another 12. Of posts that didn't make it as far as the LLM, here are some additional numbers:

Rejected by Rule: 1362

Rejected by Score Threshold: 99

And here is a summary of the scoring evaluation:

Accepted by Tier:

- Good: 7

- Fair: 7

- Whitelisted: 2

- Excellent: 1

I don't have much confidence in the "Good"/"Fair"/"Excellent" assessments, but it just reflects the range of scores in the scoring algorithm that the AIs and I came up with.
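For anyone who wants to check the funnel, the arithmetic behind the run summary above can be reproduced in a few lines. The figures are the ones reported for this run; the variable names are just for illustration.

```python
evaluated = 1478
rejected_by_rule = 1362
rejected_by_score = 99
accepted_by_tier = {"Good": 7, "Fair": 7, "Whitelisted": 2, "Excellent": 1}
posted = 5

rejected_before_llm = rejected_by_rule + rejected_by_score  # total rejected
sent_to_llm = evaluated - rejected_before_llm               # accepted for curation
rejected_by_llm = sent_to_llm - posted
acceptance_rate = round(100 * sent_to_llm / evaluated, 2)   # percent reaching the LLM
posted_rate = round(100 * posted / evaluated, 2)            # percent actually posted

print(rejected_before_llm, sent_to_llm, rejected_by_llm, acceptance_rate, posted_rate)
```

So roughly 92% of the evaluated posts were stopped by rules, about 7% by the score threshold, and only about a third of one percent ended up in a curation post.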

Model Changes

In addition to the coding changes, LLM availability went through some pretty big shifts, too. The free-tier model from ArliAI changed from Gemma to Qwen-3.5-27B, and Google scared me half to death in the paid tier when I realized how large the financial impact of a leaked API key could be.

ArliAI : Gemma and Qwen

For a while, I had been using ArliAI for Thoth testing with the @social account (not mine, but the publicly available private key is convenient for testing). At some point, the previous free tier model (Gemma) stopped replying, however, and after a while I noticed that it had been replaced by Qwen-3.5-27B.

I reconfigured the test branch to use Qwen, but curation runs were taking forever. It turns out that Qwen is much choosier about which posts it will let through, so it's basically unusable without rewriting the curation prompt, which I haven't taken the time to do yet.

So, as of now, ArliAI (free tier) seems to be off the table until I revisit the curation prompts.

Google/Gemini/Gemma

Meanwhile, for the @thoth.test account, I'd been using the Google paid tier for about $2 per month. Then, one day, someone posted on Twitter that their Gemini API key had leaked and someone used it to rack up multiple thousands of dollars in activity.

I immediately went to Google to set limits on my account and learned that it's not possible. If my paid API key leaked there would have been no way to prevent someone from doing the same thing. So, I shut down the billing account and switched back to the free one.

Around the same time, Google also made the gemini-3.1-flash-lite-preview model available for the free tier, so it didn't feel like much of a loss. However, I was noticing that Thoth runs on the @thoth.test account also started taking longer. It turns out that gemini-3.1-flash-lite-preview is also a very choosy model. For example, one day it rejected 139 posts and another day it rejected 216 of them.

Now, it would be nice if everyone writing on the blockchain wrote like Ernest Hemingway, but that's not the case. A tool like Thoth has to be accessible for the average Steem user, so those rejection rates are just too high. Also, I looked at some of the rejected posts and I just didn't agree with the decisions.

So, it looks like I need to revisit the curation prompts for Gemini, too. Meanwhile, I switched back to Gemma from Google's free tier. IMO, Gemma is a little bit too permissive, but I can try to compensate for that with screening & scoring. When the LLM is too restrictive, there's nothing to be done.

Conclusion

There have, of course, been many other updates that I'm not mentioning here. I have been trying to get better about versioning and maintaining a changelog, though. My last AI prompts when coding now are almost always like this:

- "Please update the version, the changelog, and the README file to reflect the changes from this session"

- "Please give me a github commit message that accurately describes the changes from this session."

So, if you want to see more details about the changes in these projects, I invite you to check out the changelogs and git commit messages.

When it reaches maturity, I think there are many potential uses for the linkedListOnSteem library, and I am hoping that we'll see a wider selection of authors from the recent Thoth algorithm changes.

Acknowledgements

I want to express my continued gratitude to the delegators and voters who are supporting Thoth and the authors that it finds.

I also want to thank all of the people who have expressed support for the project in the comments. Of course, the general sentiment towards delegation bots is not fantastic here, so I wasn't sure what to expect when I launched the project. After about a year of operation, though, the feedback has been almost entirely positive.