I'm using large language model artificial intelligence for research and content generation

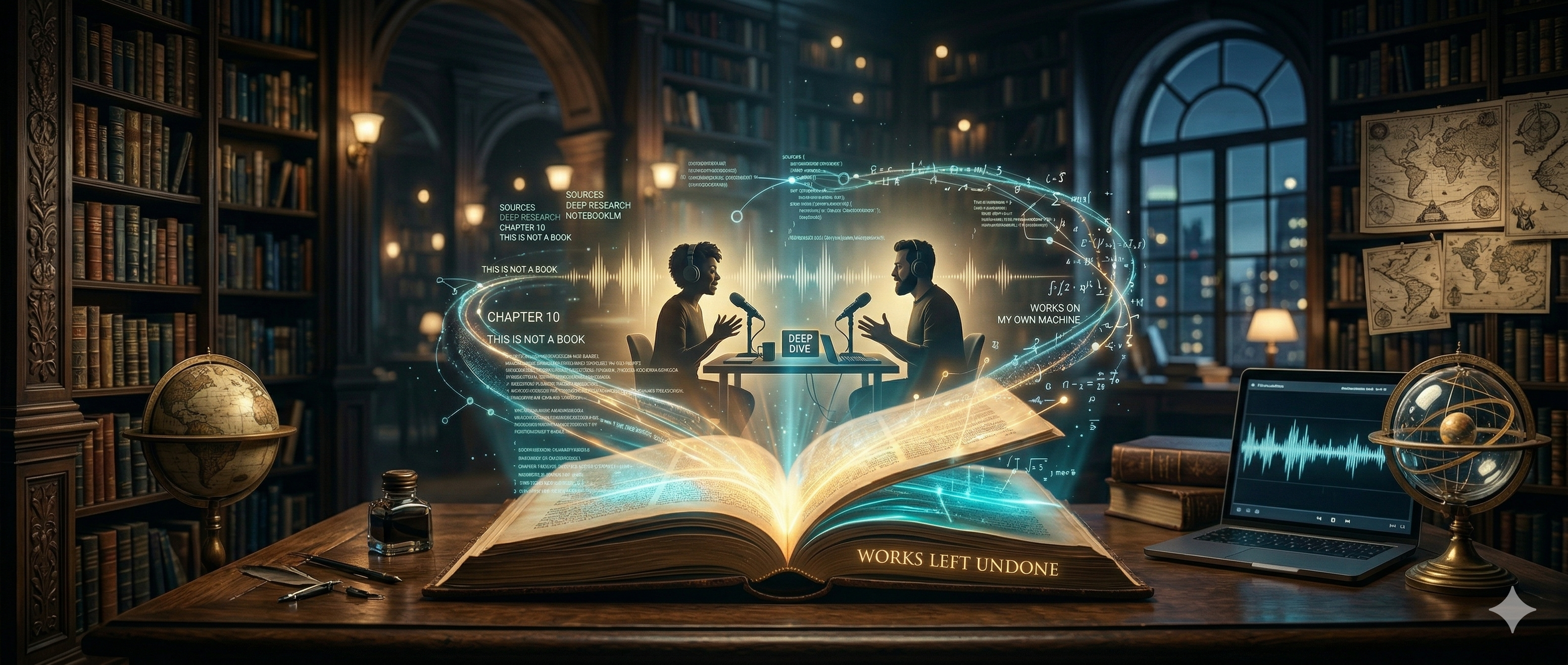

I've conducted part of my research for Open Book using AI technology, specifically LLM tools, including NotebookLM. NotebookLM offers a research tool that finds information based on topics you provide as a prompt, and it features generative tools to produce artifacts based on that research, including "deep dive" audio formatted as an exchange between two podcast hosts in conversation around what gets referred to as the "sources". One notable feature of NotebookLM is that it is designed to generate material using primarily the sources provided to it. In my case, most of those sources come from research through the tool itself, or from copy/pasted threads composed of my prompts and the responses of other LLMs.

(As an aside, I haven't used the chat feature of NotebookLM much; have you? How does it compare to other models?)

My typical research methodology includes

- generating a list of topics through prompt-response threads and

- follow-up online searches, then

- utilizing the "deep research" feature of NotebookLM (contrasted with the default "quick research")

In this way, I gather and produce material to serve as sources for a deep dive audio artifact. At times, I prompt the audio generation to steer it in a certain direction, while at other times I just want to see what the tool will produce by default.

I've only had to scrap the audio a few times over the course of two dozen or so attempts, but every file includes at least a few audio artifacts. For instance, there are two hosts, one presenting with a woman's voice and one with a man's voice. They banter back and forth, but you'll occasionally hear a third voice reply to one or the other of them, or both! There are also audio glitches; some sound like they're supposed to be a laugh or a brief comment, but they come across as mostly a buzzing sound or some crackles. Finally, the audio will completely break down every now and then, and a word or phrase is just missing, as if you're listening on a CD player from the '90s with the shock protection turned off while jogging.

In at least two cases, I ended up splitting the topics into multiple notebooks to try to get a more succinct read of the gathered material.

In any case, however, all of this was rather rushed, and quite rough around the edges—to be honest, probably straight through to the middle!

This has been a learning process, and I am looking forward to a more refined process in the future. But I've still quite enjoyed the results throughout this experiment, and I've used the same methodology for topics outside of Open Book as well, just as a new way to learn something.

I'll be presenting the deep dive "episodes" I've generated along with some commentary in a series of posts here. If you're interested in Open Book, or the processes I've used to generate these, you may want to follow along. Please let me know what you think about the material, the process, my take... I'd be glad to consider this with you, and will very much appreciate the feedback.

Here's my most recently generated audio for Open Book. This is based on research for chapter 10 of the first volume in Open Book's trilogy, Works Left Undone. This is from a chapter entitled "This is Not a Book" from "Works on My Own Machine":

Enjoy! Or don't!

Let me know either way.

I have devised a system I might put into place to denote my use of AI for Steem posts, including a series of icons that characterize different forms of content generation:

- AI-supported

- partially AI-generated

- completely AI-generated

Is there anything else you'd like to know as a consumer of content that should be denoted by a system like this?


I forgot to include the audio file with the initial publish, but I couldn't figure out a good way to embed it, so I've just posted a link.