Torrix - Self-hosted LLM observability. Every token. Every dollar

Torrix

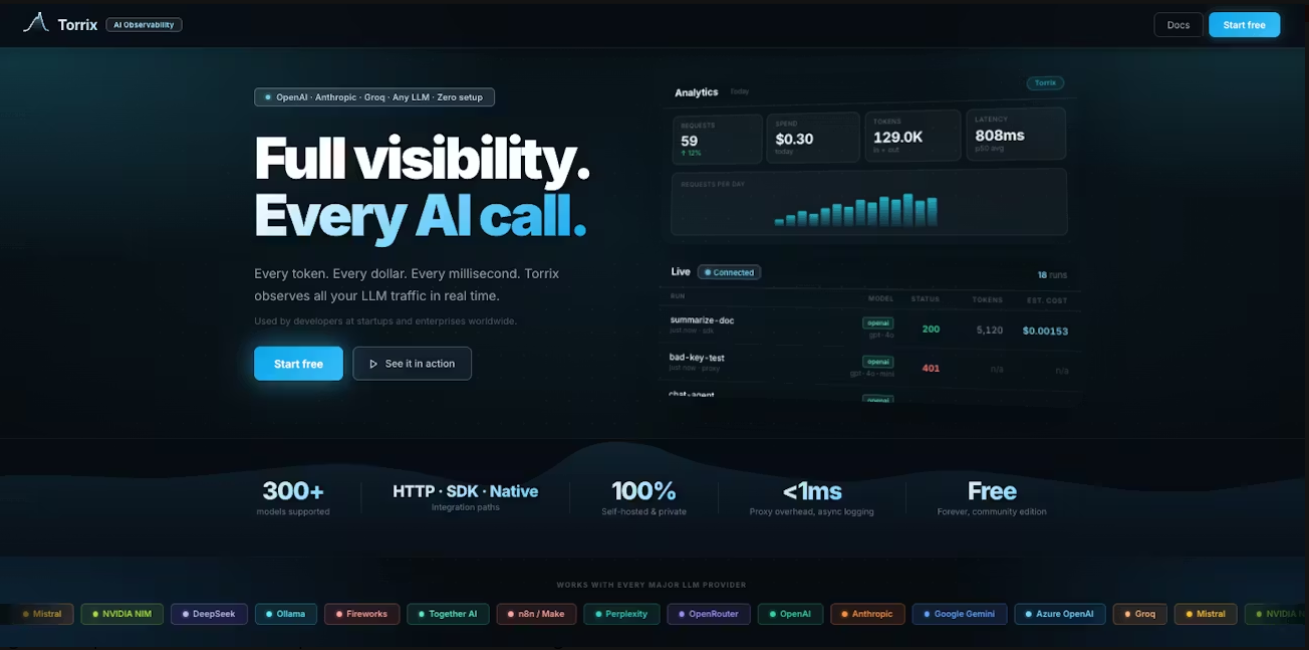



Hunter's comment

Most LLM observability tools send your prompts to their cloud. Torrix runs on your own server. Integrate by adding two lines of Python, or route any HTTP client through the proxy with no code changes. Every AI call is logged instantly: tokens, cost, latency, and the full prompt trace. It works with OpenAI, Anthropic, Gemini, Groq, Azure, Mistral, SAP AI Core, n8n, and any HTTP API. The Community edition is free forever, and your data never leaves your infrastructure.
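To give a feel for what "tokens, cost, latency, and the full prompt trace" means per call, here is a rough Python sketch of that kind of record. This is not Torrix's API or its real integration: `CallRecord`, `record_call`, `fake_model`, and the prices in `PRICE_PER_1K` are all hypothetical names and numbers made up for illustration.

```python
import time
from dataclasses import dataclass
from typing import Callable

# Hypothetical per-1K-token prices in USD, for illustration only.
PRICE_PER_1K = {"prompt": 0.50, "completion": 1.50}


@dataclass
class CallRecord:
    """One logged LLM call: the prompt, token counts, latency, and cost."""
    prompt: str
    prompt_tokens: int
    completion_tokens: int
    latency_s: float
    cost_usd: float


def record_call(prompt: str, call: Callable[[str], dict]) -> CallRecord:
    """Time one model call and build a record from the usage it reports.

    `call` is assumed to return a dict containing "prompt_tokens" and
    "completion_tokens" (an assumption of this sketch, not a real API).
    """
    start = time.perf_counter()
    response = call(prompt)
    latency = time.perf_counter() - start

    cost = (
        response["prompt_tokens"] * PRICE_PER_1K["prompt"]
        + response["completion_tokens"] * PRICE_PER_1K["completion"]
    ) / 1000

    return CallRecord(
        prompt=prompt,
        prompt_tokens=response["prompt_tokens"],
        completion_tokens=response["completion_tokens"],
        latency_s=latency,
        cost_usd=cost,
    )


def fake_model(prompt: str) -> dict:
    # Stand-in for a real provider call; returns fixed usage numbers.
    return {"text": "ok", "prompt_tokens": 1000, "completion_tokens": 2000}


record = record_call("Hello", fake_model)
```

In this sketch the record is built in the same process that makes the call, so nothing is sent anywhere else, which is the point of the self-hosted approach described above.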


This is posted on Steemhunt - A place where you can dig products and earn STEEM.

