Design Debt & The "Shadow Logic": Why AI Makes Bad Software Architecture Scale Faster

Why does AI make bad software architecture scale faster? AI tools prioritize probabilistic pattern matching over systemic reasoning. They can generate functional code snippets instantly, but they lack "Architectural Intent": the deep understanding of long-term scalability, cross-region compliance, and maintainable logic. This lets teams build large, complex systems full of "Shadow Logic" that appear functional in the short term but are structurally fragile, so technical debt compounds with every generated module.

The "Implementation Mirage": Why Working Code ≠ Good Architecture

The most dangerous thing an AI can give a developer is a program that compiles. In professional software development, "it works" is only the baseline. The real value lies in whether the system can be maintained, audited, and scaled over a five-year lifecycle.

AI creates what is known as the Implementation Mirage. Because generative models can produce high-fidelity frontend components or functional API endpoints in seconds, stakeholders often assume the project is 80% complete. However, beneath the surface, the AI is often "hallucinating" logic paths that ignore:

Concurrency and Race Conditions: AI often writes code that passes a single-user test but fails under high-traffic production loads.

Memory and Resource Management: Generative tools frequently default to the most "common" library patterns from their training data, which are often the most bloated or outdated, with little regard for memory footprint.

Inverse Reasoning: AI can tell you how to build a feature, but it rarely explains why you should not build it a particular way, for example because doing so would break downstream dependencies.
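The concurrency point is the easiest of the three to show in code. The following is a minimal sketch, assuming a hypothetical `Inventory` class with one item in stock; the class, its method names, and the `pause` hook (which stands in for database or network latency) are illustrative and not taken from any real library. The unsafe method passes a single-user test, yet two concurrent buyers can both reserve the last item.

```python
import threading
import time


class Inventory:
    """Hypothetical stock counter used only to illustrate a check-then-act race."""

    def __init__(self, stock: int) -> None:
        self.stock = stock
        self._lock = threading.Lock()

    def reserve_unsafe(self, pause) -> bool:
        # The check and the update are separate steps with no lock around them.
        if self.stock > 0:
            pause()  # stands in for latency between the check and the update
            self.stock -= 1
            return True
        return False

    def reserve_safe(self, pause) -> bool:
        # Holding the lock makes the check and the update one atomic step.
        with self._lock:
            if self.stock > 0:
                pause()
                self.stock -= 1
                return True
            return False


def run_two_buyers(reserve, pause) -> list:
    """Run two concurrent reservation attempts and collect their results."""
    results = []

    def buyer() -> None:
        results.append(reserve(pause))

    threads = [threading.Thread(target=buyer) for _ in range(2)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return results


if __name__ == "__main__":
    # The barrier forces both buyers past the stock check before either decrements.
    barrier = threading.Barrier(2)
    unsafe = Inventory(stock=1)
    print("unsafe:", run_two_buyers(unsafe.reserve_unsafe, barrier.wait))

    safe = Inventory(stock=1)
    print("safe:  ", run_two_buyers(safe.reserve_safe, lambda: time.sleep(0.01)))
```

The barrier is there only to make the bad interleaving reproducible. In production the same interleaving happens by chance, which is why it tends to surface under load rather than in a demo.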

Scaling the "Junior-Senior" Paradox

In the modern development landscape, AI allows a junior developer to superficially mimic the output of a senior architect. They can generate complex React hooks or Python microservices without understanding the underlying Software Design Patterns required to keep those systems stable.

This leads to a phenomenon where Bad Architecture scales at the speed of light.

Pattern Drift

A developer prompts a solution for "Module A." Later, they prompt a solution for "Module B." Because the AI has no holistic, long-term memory of the codebase as a whole, it introduces slightly different architectural patterns for each. Every such inconsistency is technical debt, because each future change has to account for both conventions.
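As a small illustration of what drift can look like, here is a sketch with two hypothetical lookup functions that could plausibly come from two separate prompts. All names and data are invented for the example. Each function is reasonable on its own, but they signal "not found" in different ways, so every caller has to remember which convention applies where.

```python
from typing import Optional

USERS = {1: "ada"}
ORDERS = {10: "keyboard"}


# "Module A": a missing record is signalled by returning None.
def find_user(user_id: int) -> Optional[str]:
    return USERS.get(user_id)


# "Module B": a missing record is signalled by raising an exception.
def find_order(order_id: int) -> str:
    if order_id not in ORDERS:
        raise LookupError(f"order {order_id} not found")
    return ORDERS[order_id]


# The caller has to handle both conventions side by side.
def describe(user_id: int, order_id: int) -> str:
    user = find_user(user_id)
    if user is None:
        return "unknown user"
    try:
        order = find_order(order_id)
    except LookupError:
        return "unknown order"
    return f"{user} ordered {order}"
```

Neither convention is wrong. The cost comes from having both, and it grows with every module that adds a third or fourth variation.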

The "Lava Layer" Anti-Pattern

Every time a developer uses a new prompt to fix a bug in AI-generated code, they add a new "layer" of logic on top of the old one. Soon, the codebase becomes a "geological formation" of code: hardened, immovable logic that no one dares to refactor because nobody truly understands the intent behind the original prompt.

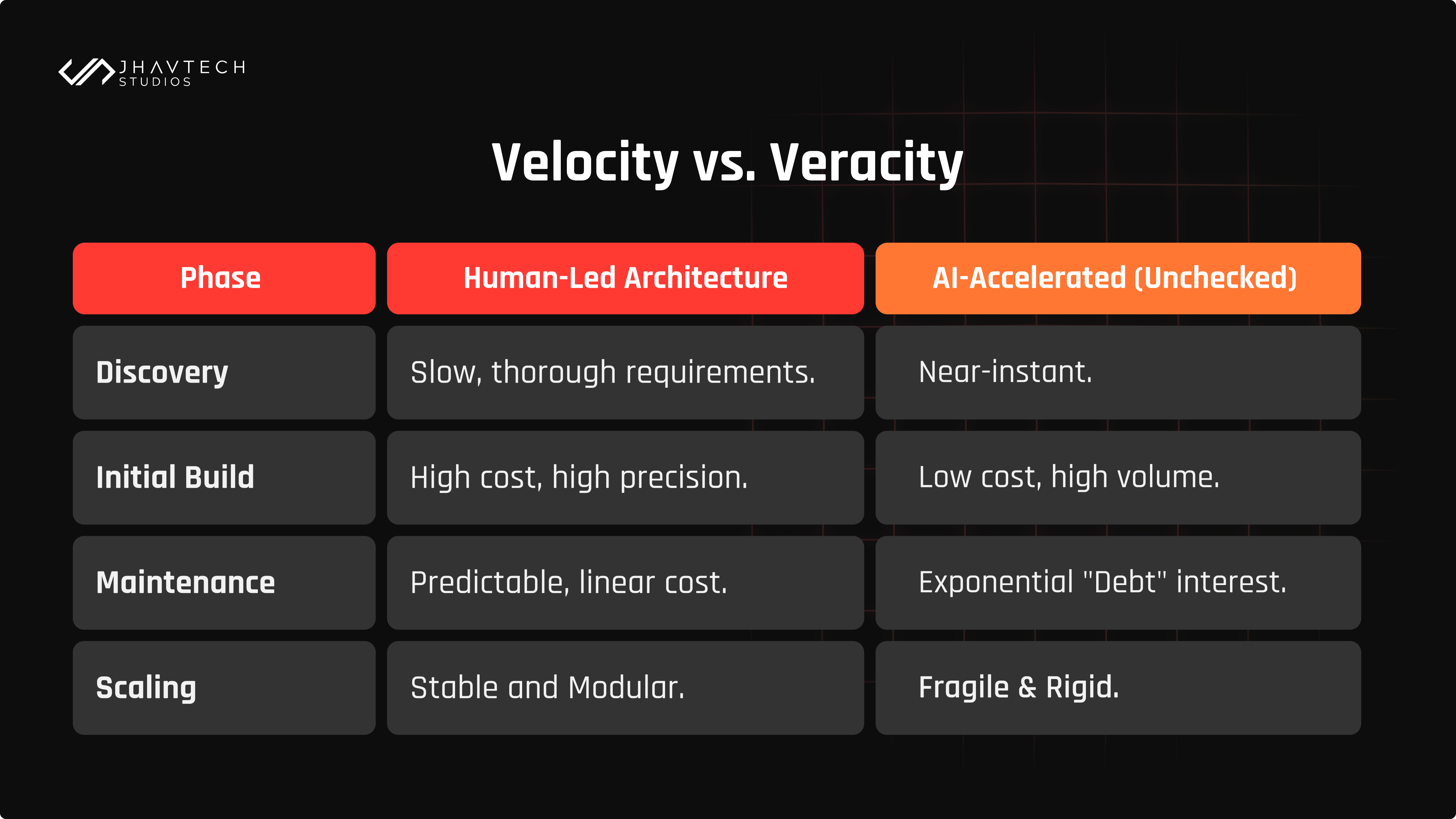

The Economics of AI-Driven Technical Debt

In 2026, the "Price of Speed" is being paid in Refactor Cycles. We are entering what analysts call the 'Year of AI-Driven Technical Debt.' According to Forrester’s 2026 projections, 75% of organizations will see their debt levels spike to critical highs this year as a direct consequence of unmonitored AI scaling.

If you save 100 hours in the initial build by letting AI dictate the system architecture, you can easily spend 300 hours six months later on the "Big Refactor," a net loss of 200 hours. For high-growth firms, this is a silent killer. It slows down the delivery "pulse" and creates a "distributed monolith" that offers all the complexity of microservices with none of the benefits.

The Compliance Gap: AI as a Regional Liability

Architecture isn't just about code; it's about context. AI models are trained on global averages. They do not naturally understand that a system handling personal data for an Australian client must meet different privacy and cross-border data obligations (such as the Australian Privacy Principles, or APPs) than a social media app in the US.

When AI scales architecture, it scales compliance risks. By the time a senior lead reviews an AI-generated pull request, they may find that the "shortcuts" taken by the model have inadvertently bypassed critical regional data laws or security protocols. This creates a "Compliance Debt" that can have legal and financial consequences far outweighing the initial time saved.
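One way to make such rules harder to bypass is to encode them as an explicit check that every write path must pass, instead of leaving them as tribal knowledge. The sketch below assumes a hypothetical, organisation-defined policy table that maps a customer's jurisdiction to permitted storage regions. The region identifiers and the table itself are illustrative assumptions, not a statement of what the APPs or any other law require; the real rules must come from legal review.

```python
from dataclasses import dataclass

# Hypothetical policy table (an assumption for this example): the storage
# regions in which each jurisdiction's personal data may be written.
ALLOWED_REGIONS = {
    "AU": {"ap-southeast-2"},
    "US": {"us-east-1", "us-west-2"},
}


class ResidencyViolation(Exception):
    """Raised when a write would place data outside its permitted regions."""


@dataclass(frozen=True)
class WriteRequest:
    customer_jurisdiction: str
    target_region: str


def check_residency(req: WriteRequest) -> None:
    """Raise ResidencyViolation unless the write is allowed by the policy table."""
    allowed = ALLOWED_REGIONS.get(req.customer_jurisdiction)
    if allowed is None:
        # Fail closed: an unknown jurisdiction is rejected, not waved through.
        raise ResidencyViolation(
            f"no residency policy defined for {req.customer_jurisdiction!r}"
        )
    if req.target_region not in allowed:
        raise ResidencyViolation(
            f"{req.customer_jurisdiction} data may not be written to {req.target_region}"
        )
```

With a guard like this in the write path, a generated shortcut that targets the wrong region fails loudly in testing instead of surfacing later in an audit.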

Practical Solutions: Reclaiming Architectural Intent

To prevent your software from becoming a victim of "Fast Bad Scaling," the focus must shift from writing code to architectural auditing. This requires a transition from traditional development to AI-Ready Engineering—a framework formalized in early 2026 to ensure that existing system assets and architectural knowledge are structured in a way that AI agents can accurately process them without introducing structural decay.

To implement this, we must establish new guardrails:

The "Schema-First" Mandate

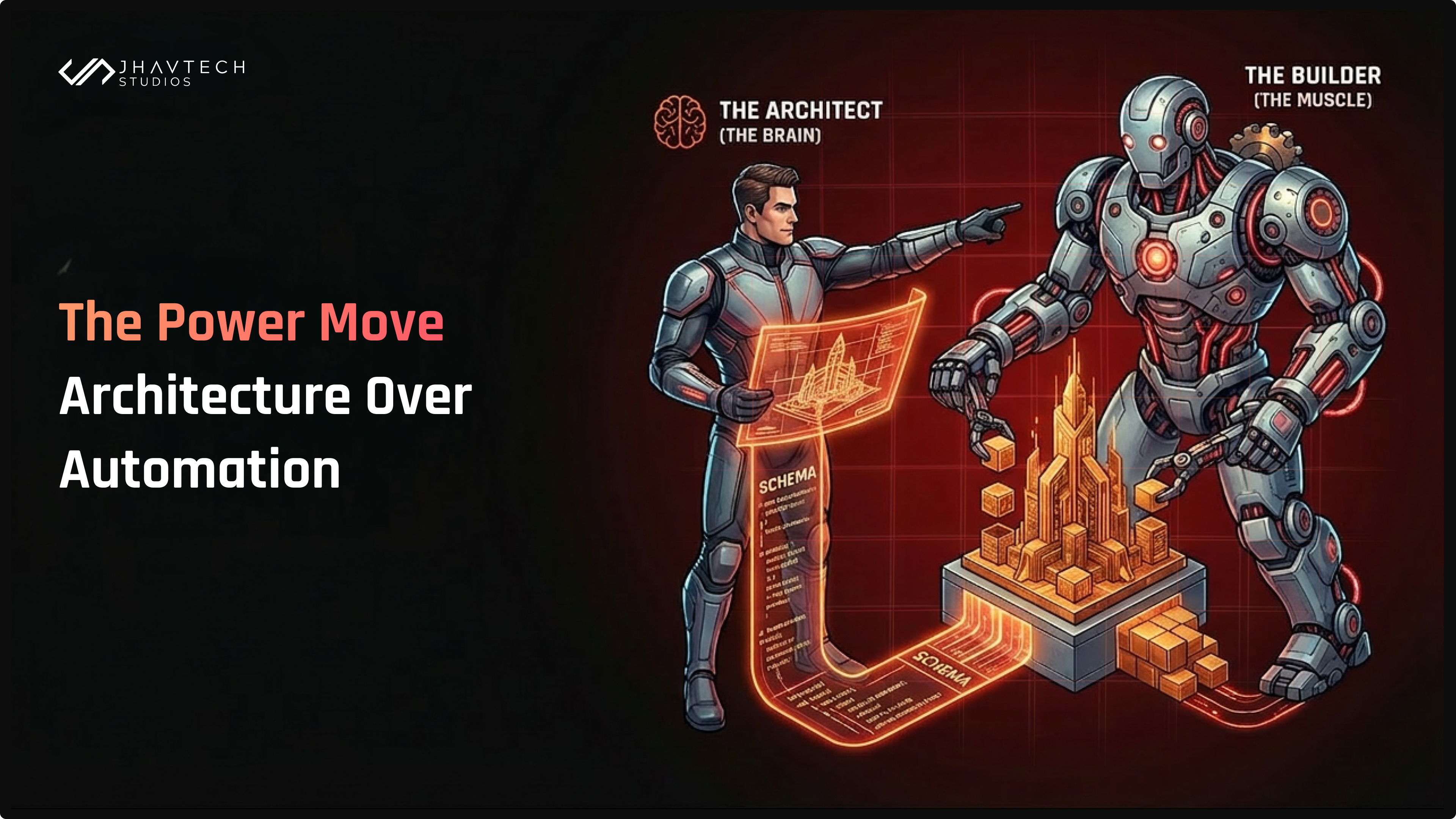

Never let an AI write logic until a human architect has defined the System Schema. Whether it's a GraphQL schema, a Database ERD, or a Class Diagram, the "Blueprints" must remain human-authored. AI should be treated as the bricklayer, never the lead architect.
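A minimal sketch of what this can look like in practice: the schema is written by a human as a plain dataclass, and anything a generated handler produces is checked against it. `InvoiceSchema`, its fields, and the `conforms` helper are hypothetical names chosen for this example, and the check covers only top-level field names and types.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class InvoiceSchema:
    """Human-authored contract (hypothetical). Generated code must conform to it."""

    invoice_id: str
    customer_id: str
    amount_cents: int
    currency: str


def conforms(payload: dict, schema=InvoiceSchema) -> list:
    """Return a list of violations; an empty list means the payload conforms."""
    problems = []
    expected = {f.name: f.type for f in fields(schema)}
    for name, expected_type in expected.items():
        if name not in payload:
            problems.append(f"missing field: {name}")
        elif not isinstance(payload[name], expected_type):
            actual = type(payload[name]).__name__
            problems.append(f"{name}: expected {expected_type.__name__}, got {actual}")
    # Fields the schema never defined are reported too, not silently accepted.
    for name in sorted(payload.keys() - expected.keys()):
        problems.append(f"unexpected field: {name}")
    return problems
```

For example, a generated handler that returns `{"invoice_id": "i1", "customer_id": "c1", "amount_cents": "12.00", "total": 1}` would be flagged for the string amount, the missing `currency`, and the unplanned `total` field.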

High-Friction Code Reviews

Increase the "friction" of the review process for AI-generated code. If a developer cannot explain the architectural trade-offs of a code block without re-running the prompt, the code is a liability and should be rejected.
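Part of this friction can be automated. The sketch below assumes a team convention, invented for this example, in which every pull request description must contain a few named sections before a human review starts. The section names and the `missing_sections` helper are hypothetical. A check like this cannot judge whether the explanation is any good; it only ensures that one was written.

```python
import re

# Hypothetical team convention: headings every pull request description must contain.
REQUIRED_SECTIONS = ("Trade-offs", "Alternatives considered", "Failure modes")


def missing_sections(pr_description: str) -> list:
    """Return the required section names that have no matching heading."""
    found = {
        match.group(1).strip().lower()
        for match in re.finditer(r"^#+\s*(.+)$", pr_description, flags=re.MULTILINE)
    }
    return [name for name in REQUIRED_SECTIONS if name.lower() not in found]
```

A continuous integration job could fail the build whenever `missing_sections` returns a non-empty list, which leaves the reviewer free to probe the reasoning itself.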

Semantic Documentation

Use AI to document the reasoning, not just the syntax. If an AI-generated piece of code is accepted, it must be accompanied by a human-verified explanation of how it impacts the overall system scalability.

Conclusion: Architecture is Intent, Not Accident

We live in a world that increasingly values speed over stability. AI is an incredible superpower for execution, but it remains a significant liability for architectural intent. Architecture is the art of intentional friction—the process of slowing down to ensure that a digital foundation can actually hold the weight of a growing business.

If we continue to let AI make primary architectural decisions simply because it's "faster," we aren't building sustainable software; we are building digital sandcastles that will inevitably collapse under the weight of their own technical debt. The developers and firms that thrive in 2026 will be those who use AI to move their fingers faster while keeping their minds firmly on the human-led blueprint.

For organizations looking to scale without these risks, partnering with experts who provide professional AI and machine learning development services is the best way to ensure that your innovation is built on a foundation of sound, high-performance architecture rather than generic, unmaintainable code.

Frequently Asked Questions

Q: How does AI-generated code contribute to technical debt?

A: AI prioritizes "syntactic correctness" over "semantic integrity." It produces code that runs but often ignores long-term maintainability, leading to inconsistent patterns and "Shadow Logic" that is difficult to refactor.

Q: Can AI replace software architects?

A: No. While AI can suggest templates, it cannot perform "contextual reasoning." It lacks the ability to account for specific business goals, future roadmaps, and regional compliance requirements like the Australian Privacy Principles.

Q: What is a "distributed monolith" in the context of AI?

A: This occurs when AI generates microservices that are so tightly coupled and inconsistently structured that they behave like a single, fragile monolithic system, providing none of the scaling benefits of true microservices.
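One rough signal of a distributed monolith is a cycle of synchronous calls between services, since services in a cycle cannot be deployed or scaled independently. The sketch below assumes you can already export a map of which service calls which. The `find_cycle` helper and the service names are hypothetical, and a cycle is only one symptom of tight coupling, not a complete diagnosis.

```python
def find_cycle(calls: dict) -> list:
    """Return one cycle of service names (first == last), or [] if there is none.

    `calls` maps each service name to the list of services it calls synchronously.
    """
    unvisited, in_progress, done = 0, 1, 2
    state = {}
    path = []

    def visit(service: str) -> list:
        state[service] = in_progress
        path.append(service)
        for callee in calls.get(service, ()):
            callee_state = state.get(callee, unvisited)
            if callee_state == in_progress:
                # The callee is already on the current path, so the path loops.
                return path[path.index(callee):] + [callee]
            if callee_state == unvisited:
                cycle = visit(callee)
                if cycle:
                    return cycle
        path.pop()
        state[service] = done
        return []

    for service in calls:
        if state.get(service, unvisited) == unvisited:
            cycle = visit(service)
            if cycle:
                return cycle
    return []
```

Given `{"orders": ["billing"], "billing": ["inventory"], "inventory": ["orders"]}`, the function reports the loop through all three services, which is the kind of finding worth raising in an architectural audit.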