I built a tool that automates newsletter curation end-to-end: here's the full breakdown

I run a niche newsletter covering AI and developer tools. For the first year, my weekly routine looked like this: open 15 browser tabs, skim Hacker News, check three subreddits, scroll through a few blogs, copy-paste things into a doc, rewrite everything in my voice, publish.

About 4-5 hours every week. Not writing. Not thinking. Just collecting and reformatting.

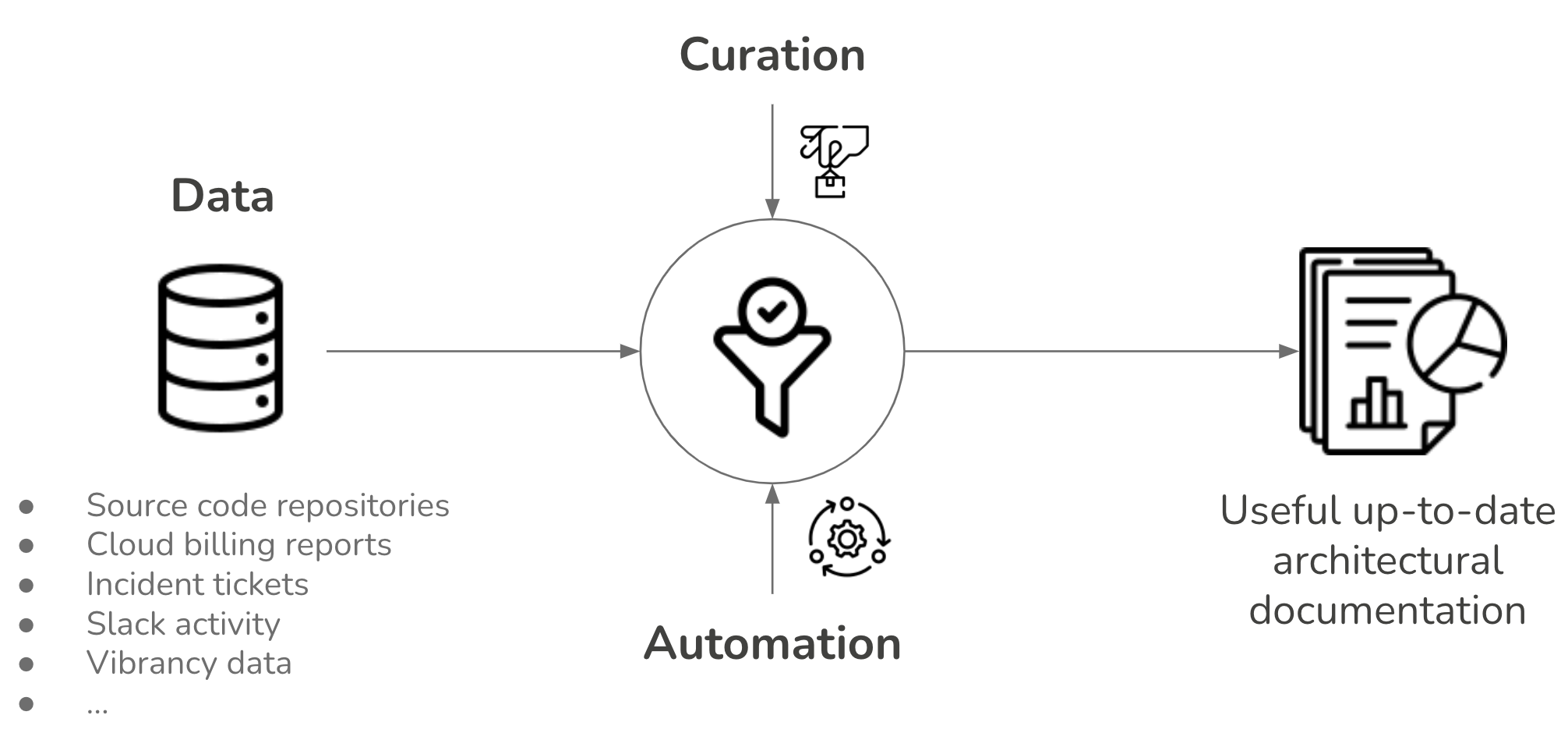

So I spent a few weeks building a system that does all of it automatically. Here's exactly how it works.

The Problem I Was Actually Solving

The obvious answer is "automate the content collection." But that's not actually the hard part.

The hard part is three things:

JS-heavy sites: half the interesting blogs in my niche don't have RSS and render their content dynamically. Basic scrapers return empty pages.

LLM hallucinations: if you dump 30 full articles into one prompt, the model loses context in the middle and starts confidently making things up.

Duplicate content: running this daily means the same article will keep appearing unless you maintain persistent memory of what you've already processed.

Everything I built is a direct response to one of these three problems.

The Architecture: 7 Phases

Phase 1: Ingestion

The engine wakes up on a schedule and works through a list of source URLs.

Every URL gets classified first:

Reddit links (e.g. reddit.com/r/MachineLearning) get automatically converted to their .rss endpoint. Reddit exposes RSS for every subreddit; most people don't know this.

Standard RSS/Atom feeds get parsed directly with feedparser.

Everything else routes to a placeholder where I wire in a custom scraper per client.

Output is a flat array of normalized dicts: {title, url, published_date, source_label, rss_summary}.
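A minimal sketch of how the classification and normalization could look, not the engine's actual code. The function names, the FEED_HINTS heuristic, and the https default are assumptions for illustration; the entry keys (title, link, published, summary) are the ones feedparser exposes on parsed entries.

```python
from urllib.parse import urlparse, urlunparse

# Hypothetical heuristic: path endings that usually indicate a feed.
FEED_HINTS = (".rss", ".xml", ".atom", "/feed", "/rss")


def classify_source(url: str) -> tuple[str, str]:
    """Return (kind, fetch_url), where kind is 'rss' or 'custom'."""
    # Source lists often omit the scheme (e.g. reddit.com/r/...); assume https.
    if "://" not in url:
        url = "https://" + url
    parts = urlparse(url)
    host = parts.netloc.lower()
    path = parts.path.rstrip("/")

    # Subreddit pages: appending .rss gives the feed endpoint.
    if (host == "reddit.com" or host.endswith(".reddit.com")) and path.startswith("/r/"):
        if not path.endswith(".rss"):
            path += ".rss"
        return "rss", urlunparse(parts._replace(path=path))

    # Anything that looks like a feed goes straight to the feed parser.
    if path.lower().endswith(FEED_HINTS):
        return "rss", url

    # Everything else needs a per-site scraper.
    return "custom", url


def normalize(entry: dict, source_label: str) -> dict:
    """Map one parsed feed entry onto the flat item shape."""
    return {
        "title": entry.get("title", "").strip(),
        "url": entry.get("link", ""),
        "published_date": entry.get("published", ""),
        "source_label": source_label,
        "rss_summary": entry.get("summary", ""),
    }
```

In a real run, each entry in feedparser.parse(fetch_url).entries would be passed through normalize. A suffix check will miss feeds with unusual URLs, so a stricter version might fetch the URL and inspect the content type.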

Phase 2: Filtering

Before spending any money on LLM calls, the engine aggressively prunes.

Five filters run in sequence:

Time filter: configurable window (24h / 48h / 72h / 1 week). Anything older gets dropped.

Keyword filter: regex pass against title + summary. Configured include/exclude lists per client. A newsletter about AI drops anything mentioning crypto, for example.

Deduplication: compares every URL against a persistent seen_urls store. Already processed = dropped.

Per-feed cap: max N items per source so one firehose feed doesn't dominate.

Total cap: hard ceiling on items to prevent runaway API costs during heavy news cycles.

This phase alone typically cuts the raw ingestion by 60-70%.
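The five filters can be sketched as a single pass over the items. This is an illustration under assumptions: apply_filters and its parameter names are hypothetical, published_date is assumed to already be a timezone-aware datetime, and the include/exclude lists are assumed to be regex fragments.

```python
import re
from collections import Counter
from datetime import datetime, timedelta, timezone


def apply_filters(items, seen_urls, *, now, window_hours=48,
                  include=None, exclude=None, per_feed_cap=5, total_cap=30):
    """Run the five filters in order and return the surviving items."""
    cutoff = now - timedelta(hours=window_hours)
    include_re = re.compile("|".join(include), re.I) if include else None
    exclude_re = re.compile("|".join(exclude), re.I) if exclude else None
    per_feed = Counter()
    kept = []

    for item in items:
        text = f"{item['title']} {item['rss_summary']}"
        # 1. Time filter: drop anything older than the window.
        if item["published_date"] < cutoff:
            continue
        # 2. Keyword filter: must match an include term, must not match an exclude term.
        if include_re and not include_re.search(text):
            continue
        if exclude_re and exclude_re.search(text):
            continue
        # 3. Deduplication against the persistent store.
        if item["url"] in seen_urls:
            continue
        # 4. Per-feed cap so one source can't dominate.
        if per_feed[item["source_label"]] >= per_feed_cap:
            continue
        # 5. Total cap: stop once the ceiling is reached.
        if len(kept) >= total_cap:
            break
        per_feed[item["source_label"]] += 1
        kept.append(item)
    return kept
```

Running the cheap checks first means the caps only count items that would have survived anyway. Short include terms like "ai" match inside longer words, so word boundaries (for example r"\bai\b") are worth adding in practice.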

Phase 3: Full-Text Extraction (The Waterfall)

This is the interesting one.

RSS feeds often only include a 2-3 sentence teaser, not the full article. The LLM needs the full article to do useful work. So the engine runs a waterfall:

Step 1: Check if RSS already has enough text. If the summary is over 250 characters, skip scraping entirely. Most of the time this saves the network call.

Step 2: newspaper3k (fast path). A Python library built for article extraction. Works on ~60% of standard sites. Failure is detected not by exceptions, but by output validation: if the returned text is under 250 chars, or contains phrases like "Access Denied" or "Enable JavaScript," it's flagged as failed and the next step triggers.

Step 3: BeautifulSoup (fallback). Standard HTTP request + DOM parse. The HTTP status code is checked before any parse is attempted. It strips scripts, styles, nav, and footer tags, then passes the remaining body text on to the LLM. There are no hardcoded CSS selectors, because those break the moment a site redesigns.

Step 4: Playwright (heavy lifter). If both prior steps fail, a headless Chromium browser spins up, waits for networkidle, then extracts the rendered HTML and runs it through html2text. This handles the JS-heavy sites the earlier steps can't. It's slow, so it runs last.

Rotating residential proxies sit as a placeholder throughout. Not active yet, but the hook is there for sites with aggressive bot protection.
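The control flow of the waterfall, including failure detection by output validation, can be sketched as below. The names looks_valid and extract_full_text are hypothetical, and the extractors are passed in as plain callables; in practice each would wrap newspaper3k, BeautifulSoup, or Playwright. The 250-character threshold and the two block phrases come from the description above.

```python
from typing import Callable, Iterable, Optional

MIN_CHARS = 250
BLOCK_PHRASES = ("access denied", "enable javascript")


def looks_valid(text: Optional[str]) -> bool:
    """Output validation: long enough and free of block-page phrases."""
    if not text or len(text) < MIN_CHARS:
        return False
    lowered = text.lower()
    return not any(phrase in lowered for phrase in BLOCK_PHRASES)


def extract_full_text(item: dict,
                      extractors: Iterable[tuple[str, Callable[[str], str]]]):
    """Return (text, step_name) from the first step that yields valid text."""
    # Step 1: the RSS summary may already be long enough.
    if len(item.get("rss_summary", "")) > MIN_CHARS:
        return item["rss_summary"], "rss"

    # Steps 2-4: try each extractor in the order given, cheapest first.
    for name, extract in extractors:
        try:
            text = extract(item["url"])
        except Exception:
            # A crash counts as a failed step, same as bad output.
            continue
        if looks_valid(text):
            return text, name
    return None, "failed"
```

Returning the step name alongside the text makes it easy to log which sites always fall through to the browser, which is useful when deciding where a custom scraper is worth writing.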

Phase 4: AI Relevance Gating (Per-Article)

This is the fix for the "lost in the middle" hallucination problem.

Instead of batching all articles into one prompt, the engine processes them one at a time. Each article gets a single prompt that does two things simultaneously:

Relevance check: is this actually about the client's niche? Tangentially related articles get dropped here.

Summarization: if relevant, produce a 2-4 sentence factual summary.

The LLM returns a structured JSON response: {"relevant": true/false, "reasoning": "...", "summary": "..."}.

Articles that come back relevant: false are discarded. What survives is a compact array of clean, verified summaries.

Doing it this way means each LLM call is small and focused. The model never has to hold 30 articles in context at once.
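A sketch of one gating call, assuming the model is reached through a call_llm function that takes a prompt string and returns the raw reply. That function, the prompt wording, and gate_article itself are placeholders for illustration; only the JSON shape comes from the description above.

```python
import json
from typing import Callable, Optional

PROMPT_TEMPLATE = """You are screening articles for a newsletter about {niche}.
Reply with JSON only, in this shape:
{{"relevant": true or false, "reasoning": "...", "summary": "..."}}
If the article is relevant, write a 2-4 sentence factual summary.
If it is not, leave "summary" empty.

TITLE: {title}
ARTICLE:
{text}
"""


def gate_article(article: dict, niche: str,
                 call_llm: Callable[[str], str]) -> Optional[dict]:
    """Run one small prompt for one article; return it with a summary, or None."""
    prompt = PROMPT_TEMPLATE.format(niche=niche, title=article["title"],
                                    text=article["text"])
    raw = call_llm(prompt)

    # Models sometimes add prose or a Markdown fence around the JSON,
    # so parse only the outermost {...} span.
    start, end = raw.find("{"), raw.rfind("}")
    if start == -1 or end <= start:
        return None
    try:
        verdict = json.loads(raw[start:end + 1])
    except json.JSONDecodeError:
        return None  # unparseable reply: treat as not relevant

    if verdict.get("relevant") is not True or not verdict.get("summary"):
        return None
    return {**article, "summary": verdict["summary"]}
```

Treating an unparseable reply as "not relevant" is a conservative choice: a dropped article costs less than a garbled summary reaching the draft. A retry could be added instead if too many articles are lost this way.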

Phase 5: Newsletter Compilation

Once all articles are individually summarized and vetted, the approved summaries get sent to the LLM in a single lightweight batch.

This final prompt is doing the creative work: taking the summaries and compiling them into a newsletter draft that matches the client's exact tone, structure, and formatting preferences. Because the input is already distilled summaries rather than raw articles, the prompt is short and the model stays focused.

If image generation is enabled, this prompt also outputs descriptive image generation prompts alongside the text, one per section.
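One way the compilation prompt and its optional image instructions could be assembled. The wording of the instructions, the IMAGE_PROMPT: marker, and both function names are assumptions made for this sketch, not the prompts the engine actually uses.

```python
BASE_INSTRUCTIONS = (
    "Compile the article summaries below into a newsletter draft.\n"
    "Tone: {tone}\n"
    "Use only facts from the summaries and link each item to its URL.\n"
)
IMAGE_INSTRUCTIONS = (
    "After each section, add a line starting with 'IMAGE_PROMPT:' that "
    "describes an illustration for that section.\n"
)


def build_compile_prompt(summaries: list[dict], tone: str,
                         with_images: bool = False) -> str:
    """Assemble the single batch prompt from already-vetted summaries."""
    parts = [BASE_INSTRUCTIONS.format(tone=tone)]
    # Image instructions are only included when the client wants images.
    if with_images:
        parts.append(IMAGE_INSTRUCTIONS)
    parts.append("SUMMARIES:")
    for i, item in enumerate(summaries, start=1):
        parts.append(f"{i}. {item['title']} ({item['url']})\n   {item['summary']}")
    return "\n".join(parts)


def extract_image_prompts(draft: str) -> list[str]:
    """Pull the image prompts out of the model's draft, in section order."""
    prefix = "IMAGE_PROMPT:"
    return [line[len(prefix):].strip()
            for line in draft.splitlines() if line.startswith(prefix)]
```

A fixed line prefix keeps the image prompts easy to separate from the newsletter text with a plain string check, with no second LLM call needed.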

Phase 6: Image Generation (Modular)

If the Phase 5 output contains image prompts, they get routed to a generation API. Currently wired for DALL-E 3, with placeholders for Nano Banana (Gemini Flash Image) and Stable Diffusion.

This whole phase is behind an if/else. For clients who don't want images, or want to choose manually, it's simply skipped. The two-version prompt system (with and without image instructions) means there's no wasted token generation for clients who don't need it.

Phase 7: Delivery + State Commit

The formatted newsletter gets pushed to the client's platform. Currently wired: the Beehiiv API (creates a draft) and local file output. Substack, Google Docs, and AWS SES are placeholders.

After successful delivery, all processed URLs get appended to seen_urls. On the Python/GitHub Actions version, this file gets git committed back to the repo. GitHub Actions destroys local state after every run, so the commit is the persistence mechanism. On the n8n version, it uses n8n's built-in workflow static data store.
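A minimal sketch of the seen_urls store on the Python side, assuming it is kept as a JSON list in a file inside the repo. The file format and both function names are assumptions. The git commit itself would happen in a later workflow step and is not shown.

```python
import json
from pathlib import Path


def load_seen(path: Path) -> set[str]:
    """Read the seen-URL store; a missing file means a first run."""
    if not path.exists():
        return set()
    return set(json.loads(path.read_text(encoding="utf-8")))


def commit_seen(path: Path, seen: set[str], processed: list[str]) -> set[str]:
    """Add this run's URLs and write the store back to disk."""
    updated = seen | set(processed)
    # Sorted output keeps the git diff small and stable between runs.
    tmp = path.with_suffix(".tmp")
    tmp.write_text(json.dumps(sorted(updated), indent=2), encoding="utf-8")
    # Swap the file in one step so a crash can't leave a half-written store.
    tmp.replace(path)
    return updated
```

Calling commit_seen only after delivery succeeds matters: if the push to the client's platform fails, the URLs stay unseen and get picked up again on the next run.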

What It Actually Replaced

My old weekly process: ~4.5 hours of manual collection, reading, and rewriting.

Current process: review the draft, make edits, hit publish. About 20-30 minutes.

The engine runs on GitHub Actions free tier (2,000 minutes/month). For a daily newsletter, one run is about 3-4 minutes, so a month of daily runs uses roughly 90-120 minutes. That's well inside the free tier.

LLM costs per run on 30 articles: roughly $0.20-$0.60 using Gemini 2.5 Flash.

The Stack

Python + feedparser, newspaper3k, BeautifulSoup, Playwright

Google AI Studio (Gemini 1.5 Flash) for relevance gating and compilation

GitHub Actions for scheduling and hosting

I'm also working on an n8n version. n8n can't handle JS-heavy websites natively, so I'm still looking for a workaround there.

If You Want This Built For Your Newsletter

I originally built this just to get my own weekends back, but the architecture turned out to be incredibly scalable.

I’m hanging out in the comments, so let me know if you have questions about the extraction waterfall, handling JS-heavy sites, or how to tune the LLM prompts to maintain your brand voice.

Also, is there anything in this architecture you would have approached differently?